How Large Language Models Work: Inside ChatGPT, Claude, and Gemini

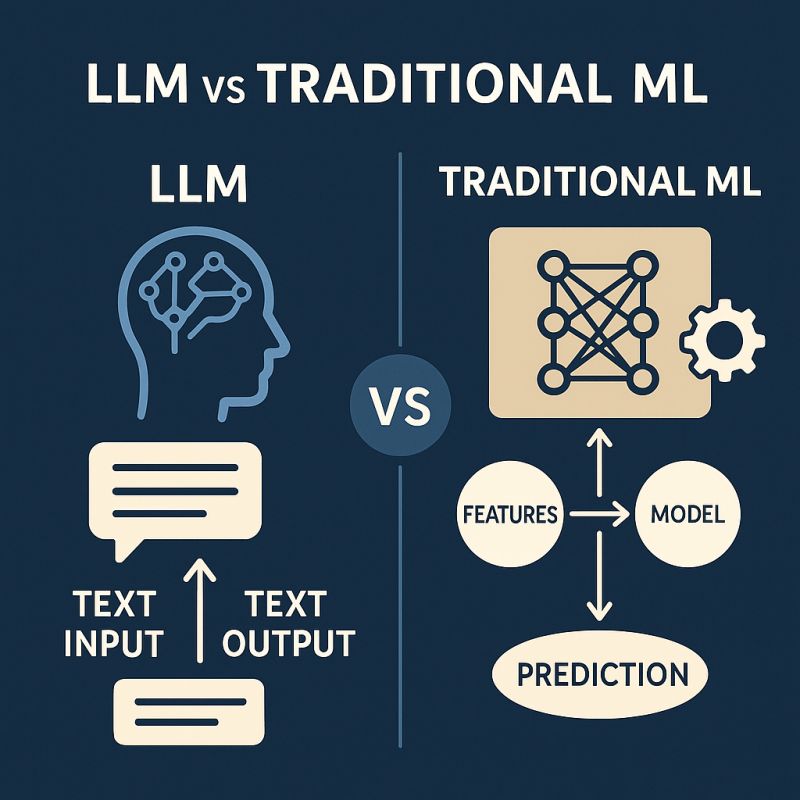

Large Language Models (LLMs) are the technology behind many modern AI assistants. Tools like ChatGPT, Claude, and Gemini can write essays, generate code, answer questions, and even hold conversations that feel surprisingly natural.

But how do these systems actually generate text?

Let’s break down the core ideas that power Large Language Models.

1. Tokens: The Building Blocks of Language

Before a model can process text, the text is first broken into smaller pieces called tokens.

A token can be:

A word

Part of a word

A punctuation mark

For example, the sentence:

AI is transforming technology.

might be split into tokens like:

AI | is | transform | ing | technology | .

Each token is then mapped to a numeric ID from the model’s vocabulary so the model can process it mathematically.

Large language models don’t read sentences the way humans do. Instead, they process sequences of tokens and learn relationships between them.
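As a rough illustration of the split-then-number step, here is a minimal sketch. The vocabulary, the `tokenize` and `encode` helpers, and the longest-match rule are all assumptions made for this example; production tokenizers learn their subword vocabulary from data and use more sophisticated algorithms.

```python
import re

# Hypothetical toy vocabulary; real tokenizers learn tens of thousands of
# subword entries from data rather than using a hand-written list.
VOCAB = ["AI", "is", "transform", "ing", "technology", ".", "<unk>"]
TOKEN_TO_ID = {token: i for i, token in enumerate(VOCAB)}


def tokenize(text):
    """Greedy longest-match split against VOCAB (a stand-in for a real tokenizer)."""
    tokens = []
    # First split into words and punctuation marks.
    for piece in re.findall(r"\w+|[^\w\s]", text):
        start = 0
        while start < len(piece):
            # Try the longest vocabulary entry that matches at this position.
            for end in range(len(piece), start, -1):
                if piece[start:end] in TOKEN_TO_ID:
                    tokens.append(piece[start:end])
                    start = end
                    break
            else:
                # Nothing matched: emit an unknown marker and skip the rest.
                tokens.append("<unk>")
                break
    return tokens


def encode(text):
    """Map each token to its numeric ID."""
    return [TOKEN_TO_ID[t] for t in tokenize(text)]


tokens = tokenize("AI is transforming technology.")
ids = encode("AI is transforming technology.")
print(tokens)  # ['AI', 'is', 'transform', 'ing', 'technology', '.']
print(ids)     # [0, 1, 2, 3, 4, 5]
```

The list of IDs, not the original characters, is what the model actually receives as input.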

2. Training Data: Learning from Massive Text Collections

To generate meaningful responses, language models are trained on extremely large datasets. These datasets include publicly available text such as:

Books

Articles

Websites

Forums

Documentation

Code repositories

During training, the model reads billions or even trillions of tokens.

The goal is not to store documents word for word, although some frequently repeated passages can end up memorized. Instead, the model mainly learns patterns in language such as grammar, context, tone, and relationships between words.

For example, after seeing millions of sentences, the model learns patterns like:

Paris → often followed by → France

doctor → related to → hospital

code → often paired with → programming languages

This pattern recognition is what allows the model to produce coherent responses.
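To make the idea of learning patterns from text concrete, here is a deliberately simple sketch that counts which word follows which in a tiny corpus. The corpus and the variable names are invented for this example, and counting adjacent pairs is far cruder than what a neural network learns; it only shows how statistics can emerge from raw text.

```python
from collections import Counter, defaultdict

# Hypothetical miniature corpus; real training sets contain billions of tokens.
corpus = [
    "paris is the capital of france",
    "the doctor works at the hospital",
    "paris is in france",
]

# For every word, count how often each other word comes right after it.
next_word_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for current, following in zip(words, words[1:]):
        next_word_counts[current][following] += 1

print(next_word_counts["paris"].most_common(1))  # [('is', 2)]
print(dict(next_word_counts["of"]))              # {'france': 1}
```

An LLM replaces these raw counts with billions of learned parameters, which lets it generalize to word sequences it has never seen.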

3. Transformers: The Architecture That Made It Possible

The major breakthrough behind modern LLMs is the Transformer architecture, introduced in the 2017 research paper “Attention Is All You Need.”

Transformers allow models to understand context across an entire sentence or paragraph rather than just reading word by word.

A key idea inside transformers is something called attention.

Attention helps the model determine which words in a sentence are most important when predicting the next word.

For example:

“The trophy didn’t fit in the suitcase because it was too big.”

Here, the word “it” refers to the trophy. A transformer model uses attention mechanisms to understand this relationship.

This ability to capture long-range context is what makes transformer models powerful for tasks like writing, summarizing, translating, and coding.
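The attention idea can be sketched in a few lines of plain Python. This is a simplified, single-query version of scaled dot-product attention; the two-dimensional vectors below are made up by hand so that the query for “it” lines up with “trophy”, whereas a real model learns such vectors during training and works with far more dimensions.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    # Similarity between the query and each key.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


# Made-up vectors for three words from the example sentence.
words = ["trophy", "suitcase", "big"]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query_for_it = [2.0, 0.0]  # chosen by hand to point toward "trophy"

weights, output = attention(query_for_it, keys, values)
for word, weight in zip(words, weights):
    print(f"{word}: {weight:.2f}")
```

With these hand-picked numbers, “trophy” receives the largest weight, so its value vector contributes most to the representation of “it”.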

4. Probability-Based Predictions: The Core Mechanism

At its core, a language model generates text using probability.

The model assigns a probability to every possible next token based on the tokens that came before.

Imagine the sentence:

“The capital of France is”

The model calculates probabilities for possible next tokens:

Paris → 92%

London → 3%

Berlin → 2%

Madrid → 1%

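Because “Paris” has the highest probability, it is by far the most likely choice. In practice, many systems sample from these probabilities rather than always taking the top token, which is why the same prompt can produce different responses.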
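As a rough sketch of this selection step, the snippet below compares two strategies: always taking the top token, and drawing a token at random in proportion to its probability. The function names and the `<other>` bucket (covering the 2% not listed in the example) are assumptions made for illustration.

```python
import random

# Probabilities from the example; "<other>" is a hypothetical bucket for
# the remaining 2% spread across every other token.
next_token_probs = {
    "Paris": 0.92,
    "London": 0.03,
    "Berlin": 0.02,
    "Madrid": 0.01,
    "<other>": 0.02,
}


def pick_greedy(probs):
    """Always return the single most likely token."""
    return max(probs, key=probs.get)


def pick_sampled(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so runs are repeatable
greedy_choice = pick_greedy(next_token_probs)
samples = [pick_sampled(next_token_probs, rng) for _ in range(1000)]

print(greedy_choice)            # Paris
print(samples.count("Paris"))   # close to 920 out of 1000
```

Greedy selection is fully deterministic, while sampling occasionally picks a less likely token.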

Text generation works by repeating this process again and again:

Read the existing tokens

Predict probabilities for the next token

Choose one token

Add it to the sequence

Repeat

This loop continues until the model produces a special end-of-sequence token or reaches a length limit.
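The steps above can be sketched as a short loop. The lookup table here is hand-written and stands in for a trained model, and the `generate` function and `<end>` marker are hypothetical names chosen for this example. Unlike a real LLM, this toy only looks at the most recent token when predicting the next one.

```python
# Hypothetical hand-written table standing in for a trained model:
# for each token, the probabilities of the token that follows it.
NEXT_TOKEN_PROBS = {
    "The": {"capital": 0.9, "city": 0.1},
    "capital": {"of": 1.0},
    "of": {"France": 0.8, "Spain": 0.2},
    "France": {"is": 1.0},
    "is": {"Paris": 0.92, "London": 0.08},
    "Paris": {"<end>": 1.0},
}


def generate(prompt_tokens, max_new_tokens=10):
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        # Steps 1-2: read the existing tokens and get next-token probabilities.
        # This toy model only uses the last token; an LLM uses all of them.
        probs = NEXT_TOKEN_PROBS.get(tokens[-1])
        if probs is None:
            break
        # Step 3: choose one token (here, simply the most likely one).
        next_token = max(probs, key=probs.get)
        if next_token == "<end>":
            break
        # Step 4: add it to the sequence. Step 5: repeat.
        tokens.append(next_token)
    return tokens


result = generate(["The", "capital", "of", "France", "is"])
print(" ".join(result))  # The capital of France is Paris
```

The `max_new_tokens` cap plays the role of a length limit, and the `<end>` marker plays the role of a stop signal.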

5. Why Responses Feel Intelligent

Even though the core process is probability-based, the massive scale of training data and advanced transformer architecture allow these models to generate responses that appear thoughtful and intelligent.

Models like ChatGPT, Claude, and Gemini are trained on enormous datasets and contain billions of parameters that capture complex relationships between words, ideas, and contexts.

This enables them to:

Write essays and articles

Generate programming code

Summarize documents

Translate languages

Answer complex questions

Final Thoughts

Large Language Models may appear magical, but their core mechanism is surprisingly simple: predicting the next token based on probability.

What makes them powerful is the scale of data, the transformer architecture, and the ability to model complex relationships in language.

As these systems continue to evolve, they are rapidly becoming one of the most important technologies shaping the future of communication, software development, and knowledge sharing.