LLM vs Traditional Machine Learning: Understanding the Key Differences

Artificial Intelligence has evolved rapidly over the past decade. One of the biggest advancements in recent years is the emergence of Large Language Models (LLMs). These models power many modern AI systems capable of generating text, answering questions, writing code, and even assisting in research.

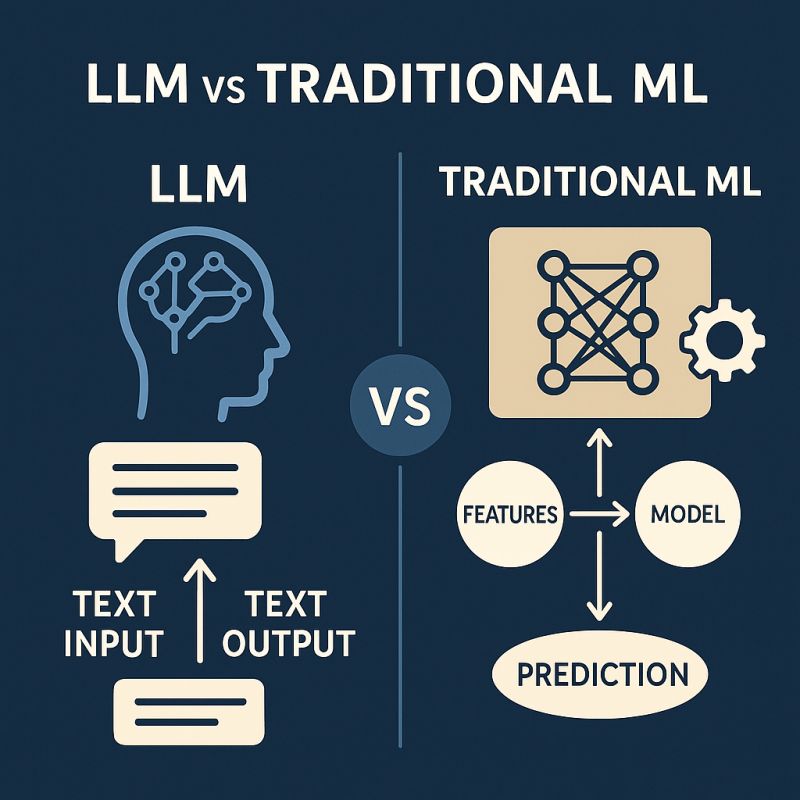

However, before LLMs became popular, most AI systems relied on traditional machine learning (ML) algorithms such as Logistic Regression, Decision Trees, Support Vector Machines, and Random Forests. While both approaches fall under the broader field of machine learning, they differ significantly in architecture, training methods, and use cases.

This article explores the key differences between LLMs and traditional machine learning, and when each approach should be used.

What Are Large Language Models (LLMs)?

Large Language Models are deep learning models designed to understand and generate human language. They are typically built using transformer architectures and trained on massive datasets containing text from books, websites, articles, and other sources.

LLMs learn language patterns, grammar, context, and relationships between words. Because of their scale and the breadth of their training data, they can perform a wide range of tasks, often without task-specific training.

Common capabilities of LLMs include:

Text generation

Question answering

Code generation

Translation

Summarization

Conversational AI

LLMs typically have billions or even trillions of parameters, making them extremely powerful but also computationally expensive to train and run.
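To give a rough sense of why parameter count translates into cost, the sketch below estimates the memory needed just to hold a model's weights. The function name `weight_memory_gb` and the example model sizes are illustrative assumptions, not figures for any specific model, and real deployments need additional memory beyond the weights themselves.

```python
def weight_memory_gb(num_params, bytes_per_param=2):
    """Estimate memory (in GB, i.e. 10^9 bytes) needed to store the weights alone."""
    return num_params * bytes_per_param / 1e9

# Hypothetical model sizes, stored as 16-bit floats (2 bytes per parameter).
for name, params in [("7B", 7_000_000_000), ("70B", 70_000_000_000)]:
    print(f"{name}: ~{weight_memory_gb(params):.0f} GB of weights")
# prints "7B: ~14 GB of weights" and "70B: ~140 GB of weights"
```

Even under these simplified assumptions, the larger model would not fit in the memory of a single consumer GPU, which is one reason LLM deployment tends to require dedicated infrastructure.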

What Is Traditional Machine Learning?

Traditional machine learning refers to earlier methods that typically work on structured (tabular) data using well-defined algorithms. In the common supervised setting, these models learn patterns from labeled datasets and make predictions based on input features.

Examples of traditional ML algorithms include:

Logistic Regression

Decision Trees

Random Forests

Support Vector Machines (SVM)

K-Nearest Neighbors

Unlike LLMs, traditional ML models are usually task-specific. For example, a model trained to detect spam emails cannot automatically summarize a document or answer questions.

These models often require feature engineering, where developers manually select and preprocess relevant input features to improve performance.
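As a minimal sketch of what this looks like in practice, the example below builds a toy spam detector: a few hand-picked features feed a logistic regression trained with plain gradient descent. The keyword list, the feature choices, the sample emails, and all function names are assumptions made up for illustration; a real project would more likely use a library and far more data.

```python
import math

SPAM_WORDS = {"free", "winner", "prize"}  # assumed keyword list, for illustration only

def extract_features(email):
    """Turn raw text into hand-picked numeric features (the feature engineering step)."""
    words = email.split()
    return [
        float(email.count("!")),                               # number of exclamation marks
        sum(w.isupper() for w in words) / max(len(words), 1),  # share of ALL-CAPS words
        float(any(w.lower().strip("!.,?") in SPAM_WORDS for w in words)),  # keyword present
    ]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(X, y, lr=0.1, epochs=200):
    """Fit logistic regression weights with stochastic gradient descent."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            w = [wj - lr * err * xj for wj, xj in zip(w, xi)]
            b -= lr * err
    return w, b

def is_spam(email, w, b):
    x = extract_features(email)
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) > 0.5

# Tiny made-up labeled dataset: 1 = spam, 0 = not spam.
emails = [
    "WINNER! Claim your FREE prize now!!!",
    "FREE money!!! Click NOW",
    "You are a winner! Free gift inside!",
    "Meeting moved to 3pm, see agenda attached.",
    "Can you review my pull request today?",
    "Lunch tomorrow? Let me know.",
]
labels = [1, 1, 1, 0, 0, 0]

w, b = train([extract_features(e) for e in emails], labels)
print(is_spam("Congratulations WINNER!!! Your FREE prize awaits!", w, b))
print(is_spam("Are we still on for the meeting at noon?", w, b))
```

The model only ever sees the three numbers produced by `extract_features`, so its quality depends heavily on how well those features were chosen, and it has no way to do anything other than score emails as spam or not.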

Advantages of LLMs

Versatility – One model can perform multiple tasks.

Minimal feature engineering – The model learns features automatically.

Natural language interaction – Users can interact with AI using plain language.

Generalization – LLMs can often handle tasks they were not explicitly trained for.

Limitations of LLMs

Despite their power, LLMs have several challenges:

High computational cost

Risk of generating incorrect information (hallucinations)

Decisions that are difficult to interpret

Large infrastructure requirements for deployment

Advantages of Traditional Machine Learning

Traditional ML remains valuable because it offers:

Lower computational requirements

Faster training times

Better interpretability

Strong performance on structured datasets

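For many business problems, especially those built on structured, tabular data, a well-designed traditional ML model may match or outperform a large language model while costing far less to train and run.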
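To illustrate the interpretability point, here is a minimal sketch of a one-level decision tree (a "decision stump") fitted to a tiny tabular dataset. The column names, values, and labels are invented for the example, and the function `fit_stump` is a hypothetical helper, not part of any library.

```python
def fit_stump(rows, labels):
    """Find the single 'feature > threshold' rule with the fewest training errors."""
    best = None
    for feature in rows[0]:
        for threshold in sorted({r[feature] for r in rows}):
            preds = [r[feature] > threshold for r in rows]
            errors = sum(p != y for p, y in zip(preds, labels))
            if best is None or errors < best[2]:
                best = (feature, threshold, errors)
    return best

# Hypothetical loan records (income in thousands) and whether the loan was repaid.
rows = [
    {"income_k": 25, "debt_ratio": 0.60},
    {"income_k": 32, "debt_ratio": 0.20},
    {"income_k": 48, "debt_ratio": 0.55},
    {"income_k": 55, "debt_ratio": 0.30},
    {"income_k": 70, "debt_ratio": 0.45},
    {"income_k": 90, "debt_ratio": 0.10},
]
labels = [False, False, True, True, True, True]

feature, threshold, errors = fit_stump(rows, labels)
print(f"Rule: predict repaid if {feature} > {threshold} ({errors} training errors)")
# prints "Rule: predict repaid if income_k > 32 (0 training errors)"
```

The entire learned model is a single rule that a non-specialist can read and challenge, which is difficult to achieve with a model that has billions of parameters.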

Conclusion

Large Language Models represent a major breakthrough in artificial intelligence, particularly for natural language processing tasks. Their ability to understand and generate human-like text has opened new possibilities for applications ranging from chatbots to coding assistants. However, traditional machine learning techniques remain highly relevant. They are efficient, interpretable, and often more practical for structured data problems. Rather than replacing traditional ML, LLMs should be viewed as a complementary technology. The future of AI will likely combine both approaches to build smarter, more efficient systems.